Ever since OpenAI launched ChatGPT, the media has further expanded its already large coverage of everything related to Artificial Intelligence. Making the most of Generative AI models requires Prompt Engineering skills. And so, the hottest new job and skillset was born.

But what is prompt engineering exactly, and why should you care about it?

Prompt Engineering is the practice of communicating with Generative AI models (such as ChatGPT) to obtain the desired output in an effective, efficient, and robust way. It strives to reduce the number of iterations necessary to achieve this goal and reuse as much as possible across similar use cases.

Prompt Engineering is essential because it saves human time on routine tasks and gives users enough leverage to create what would previously have been too expensive or required too much skill. Those who use it will increase their productivity severalfold, and those who don’t risk losing their jobs. For organizations, this becomes even more of an existential issue.

The metric by which Prompt Engineering performance is measured is human time saved in obtaining an output that would satisfy the original requirements without compromising on quality or the impact of said output.

For those of you who like math, a formula would look something like this:

PE Performance = Quality change factor / Human time change factor

Where:

The Quality change factor takes the value of 1 when the model’s output is at the same level as what humans have traditionally produced for the same task; 0 when the output is not good enough to be used in real life; any value in between for outputs that are usable but below the level humans usually deliver; and above 1 if the output is better than what humans produce.

This could be a bit subjective, but we’ll show you an alternative that would be easier to track.

The Human time change factor takes the value of 1 when producing the same amount of output takes the same time with AI as without it. Higher values indicate a loss of productivity (which might be offset by the Quality change factor), and lower values point to AI making the individual or organization more productive.
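As a minimal sketch, the formula can be expressed as two small Python functions. The function names and example numbers are hypothetical, and the Human time change factor is assumed to be the time a task takes with AI divided by the time it takes without it, which matches the description above (1 means no change, below 1 means faster).

```python
def time_change_factor(minutes_with_ai: float, minutes_without_ai: float) -> float:
    """Assumed definition: time with AI / time without AI (1.0 = no change, < 1.0 = faster)."""
    if minutes_without_ai <= 0:
        raise ValueError("baseline time must be positive")
    return minutes_with_ai / minutes_without_ai


def pe_performance(quality_change: float, time_change: float) -> float:
    """PE Performance = Quality change factor / Human time change factor."""
    if time_change <= 0:
        raise ValueError("time change factor must be positive")
    return quality_change / time_change


# Hypothetical task: 60 minutes by hand, 15 minutes with AI, slightly lower quality (0.9).
t = time_change_factor(15, 60)   # 0.25
print(pe_performance(0.9, t))    # 3.6
```

A score above 1 means the AI-assisted process beats the traditional one once quality and time are weighed together; here a small quality loss is more than offset by finishing in a quarter of the time.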

For instance, a startup with a one-person social media team might only be able to produce content for one channel. The social media manager loves interacting with users who react to her content, and she knows that this part of her job is the most impactful. However, she barely has any time for real conversations because she has to write content first.

So, this social media manager writes a prompt to transform a topic (let’s say Star Wars Day) into a series of related talking points.

She crafts a second prompt that takes information about the company and generates an article outline for each subtopic.

A third prompt takes each section of the outline and generates suitable paragraphs. After some refinement, the content matches the quality level she desires. Now, she can focus most of her time on conversing with users, which benefits the company and herself without hiring a content writer.
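The three-step pipeline above can be sketched in Python as a chain of prompt templates, where each step’s output becomes the next step’s input. This is an illustration under assumptions: `call_model` is a hypothetical placeholder that echoes part of its input so the example runs offline (in practice it would call whichever model or tool you use), and the prompt wording and company description are made up.

```python
from typing import Callable


def call_model(prompt: str) -> str:
    # Hypothetical stand-in for a real Generative AI call; echoes the first line so the chain runs offline.
    return f"<answer to: {prompt.splitlines()[0]}>"


# Illustrative templates; {input} receives the previous step's output.
STEPS = [
    "List five talking points about the topic: {input}",
    "Using this company profile: {company}\nWrite an article outline for each talking point:\n{input}",
    "Write engaging paragraphs for each section of this outline:\n{input}",
]


def run_chain(topic: str, company: str, model: Callable[[str], str] = call_model) -> list[str]:
    """Feed each step's output into the next prompt and return every intermediate output."""
    outputs = []
    current = topic
    for template in STEPS:
        # str.format ignores keyword arguments a template doesn't use (e.g. company in step 1).
        prompt = template.format(input=current, company=company)
        current = model(prompt)
        outputs.append(current)
    return outputs


for step_output in run_chain("Star Wars Day", "Acme, a startup selling board games"):
    print(step_output)
```

Keeping every intermediate output makes it easy to review and refine each step separately, which mirrors the manual refinement described in the example.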

In this case, our social media manager turned prompt engineer saved about 80% of the time it usually takes her to complete a task she finds tedious. She can quote that on her resume, which will come in handy if she decides to change jobs.
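Plugging this example into the first formula gives a rough worked figure, assuming (as the refinement step suggests) that the final content matches her usual quality, so the Quality change factor is about 1. Saving 80% of the time means the task now takes 20% as long:

```latex
\text{Human time change factor} = 1 - 0.8 = 0.2
\qquad
\text{PE Performance} = \frac{1}{0.2} = 5
```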

This time saving can be so significant that it allows individuals and organizations to do things they couldn’t do before. In these cases, there is no clear human-time-saving benchmark, and an estimate of the value provided by the output could be more helpful.

To accommodate this approach, the formula above changes to:

PE Performance = Impact change factor / Human time change factor

The Impact change factor works in the same way as the Quality change factor in the previous formula, but it is more quantifiable and captures more of the value generated. This variable should reflect the impact the outputs have on the organization or individual benefiting from the use of the model. A common way to do this in businesses is to estimate the change in revenue or, even better, in the profit that can be derived from the use of AI. This definition is useful because money is closely tracked in most organizations, so you can start tracking PE performance right away and avoid resorting to more subjective measures of quality or impact.
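A sketch of the impact-based variant follows, under one loudly labeled assumption: the Impact change factor is taken here as the profit attributable to the AI-assisted output divided by the profit from the traditional process (1 means the same impact). The function names and figures are hypothetical.

```python
def impact_change_factor(profit_with_ai: float, baseline_profit: float) -> float:
    """Assumed definition: profit with AI relative to the traditional process (1.0 = same impact)."""
    if baseline_profit <= 0:
        raise ValueError("baseline profit must be positive for a ratio")
    return profit_with_ai / baseline_profit


def pe_performance_by_impact(impact_change: float, time_change: float) -> float:
    """PE Performance = Impact change factor / Human time change factor."""
    if time_change <= 0:
        raise ValueError("time change factor must be positive")
    return impact_change / time_change


# Hypothetical figures: monthly profit from content rises from 10,000 to 15,000
# while the time spent drops to a quarter of the original.
impact = impact_change_factor(15_000, 10_000)   # 1.5
print(pe_performance_by_impact(impact, 0.25))   # 6.0
```

When there is no baseline at all, as with a brand-new channel, the ratio is undefined; the function raises an error in that case rather than guessing, and you would compare against an estimate instead.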

The AI’s impact will go beyond profit, however. In the long run, it will be critical for the organization to track the externalities of this process. A popular approach among publicly traded companies is to track the Environmental, Social, and corporate Governance (ESG) impact of their decisions. Although it is not the only framework, it could be a good place to start. This requires coordination across the company, and Prompt Engineers shouldn’t do it in isolation; external consultants are routinely hired for this purpose.

Continuing with the previous example, imagine that our social media manager decides to take it further and use a tweaked version of the prompts she crafted to generate content for multiple social media channels. Now, the company can reach an audience it previously couldn’t get to. Any sales from those new channels can be attributed to our new social media prompt engineer’s work, which will look even better on her resume!

The scope of Prompt Engineering often goes beyond individual prompting sessions. Frequently, solving a problem requires connecting the Generative AI model with other tools allowing for non-linear workflows and less frequent human intervention.

To illustrate this point, think about the previous example. To pass the output of one prompt to the input of the next, our social media prompt engineer can use tools such as the GPT for Sheets plugin. This Google Sheets plugin lets users send prompts to an OpenAI Large Language Model from a cell formula and display the model’s answer in that cell. The formula can take the contents of other cells as input, creating a chain of inputs and outputs without any code.

But where does Prompt Engineering even come from? In the next section, we will explore how Generative AI has developed in the last few years and how prompting started and became a more useful skill with time.

History of Prompt Engineering

We currently define prompts as any input we provide Generative AI with. It can be an instruction, an example, the beginning of a sentence, or not even be text at all. The fact that this definition is so broad reflects how much Generative AI and our understanding of working with it has evolved over time.

The first AI language models were trained to predict the next word in a sentence. More advanced models can also take direct instructions from the user. However, obtaining the desired output becomes more manageable if we give the model a clue about how we would like the output to start. We do this by simply typing the first few words of the desired output and leaving the model to complete the sentence or paragraph. Because the model receives no worked examples, this method is known as zero-shot prompting, and the few words you type are called a prompt. This meaning of the word prompt was initially restricted to just those few words after the instructions, but today it’s used in a much more general sense: any verbal input the user provides is now called a prompt.
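A zero-shot prompt of this kind can be assembled as plain text: an instruction followed by the first words of the desired answer, which the model then continues. The helper name and the wording below are hypothetical; what makes it zero-shot is that no examples are included.

```python
def zero_shot_prompt(instruction: str, output_start: str) -> str:
    """Hypothetical helper: the instruction, then the opening words the model should continue."""
    return f"{instruction.strip()}\n\n{output_start}"


prompt = zero_shot_prompt(
    "Write a one-sentence tweet announcing our Star Wars Day sale.",
    "May the Fourth be with you:",
)
print(prompt)
```

Ending the prompt mid-sentence nudges the model to continue in that exact voice and format instead of adding a preamble.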

Soon, it became clear that, in addition to the instructions and the prompt, providing input-output example pairs increases the relevance of the model’s response; this is known as few-shot prompting. These input-output pairs are similar to those used during the models’ training. The revelation was that LLMs could continue to learn even after the training stage was complete (what is now called in-context learning), although the effects would only last for that particular interaction with the model.
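The input-output pairs described above can be laid out in a single prompt, as in this minimal sketch. The `Input:`/`Output:` labels, the helper name, and the sentiment task are illustrative assumptions, not a required format; the prompt ends with an empty `Output:` slot for the model to fill.

```python
def few_shot_prompt(instruction: str, examples: list[tuple[str, str]], new_input: str) -> str:
    """Hypothetical helper: instruction, example pairs, then the new input with an empty output slot."""
    lines = [instruction.strip(), ""]
    for example_input, example_output in examples:
        lines.append(f"Input: {example_input}")
        lines.append(f"Output: {example_output}")
        lines.append("")  # blank line between examples
    lines.append(f"Input: {new_input}")
    lines.append("Output:")  # the model continues from here
    return "\n".join(lines)


prompt = few_shot_prompt(
    "Classify the sentiment of each comment as positive or negative.",
    [("Loved the new release!", "positive"), ("The app keeps crashing.", "negative")],
    "Support answered in minutes, great job.",
)
print(prompt)
```

Keeping the examples in exactly the same shape as the final query is what lets the model infer the pattern from the pairs alone.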

This was the beginning of Prompt Engineering.

On the 30th of November 2022, OpenAI released ChatGPT, a Generative AI language model fine-tuned for chat conversations. This model (from the GPT-3.5 series) and its user interface allowed users multiple interactions in the same session, making each input and output available as context for the following prompt. Prompt Engineering moved from focusing on single prompts to considering every input and output generated during a prompting session within the model’s memory window.
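The session behavior described above can be sketched as a history that grows with every turn and is trimmed to fit a window. This is a simplification under stated assumptions: the `user`/`assistant` role names follow a common chat-API convention, `echo_model` is a hypothetical stub, and the window is counted in messages, whereas real models measure it in tokens.

```python
from collections.abc import Callable

Message = dict[str, str]


class ChatSession:
    """Keeps every input and output so each new prompt is sent with the prior turns as context."""

    def __init__(self, model: Callable[[list[Message]], str], max_messages: int = 20) -> None:
        self.model = model
        self.max_messages = max_messages  # crude stand-in for the model's memory window
        self.history: list[Message] = []

    def send(self, user_text: str) -> str:
        self.history.append({"role": "user", "content": user_text})
        # Only the most recent messages fit in the window; older turns are dropped from the context.
        context = self.history[-self.max_messages:]
        reply = self.model(context)
        self.history.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages: list[Message]) -> str:
    # Hypothetical model stub: reports how many messages of context it received.
    return f"seen {len(messages)} messages"


chat = ChatSession(echo_model, max_messages=3)
print(chat.send("Give me a topic for May 4th."))  # seen 1 messages
print(chat.send("Now make it funnier."))          # seen 3 messages
print(chat.send("Shorter, please."))              # seen 3 messages
```

The third call shows the trade-off: once the window is full, the earliest turns silently fall out of the model’s context.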

While this conversational approach made OpenAI the Generative AI popularity winner overnight, this model and other non-conversational ones could still be dealt with on a prompt-by-prompt basis. This approach allows for more control over the prompting environment, and prompt engineers who want to build reliable Generative AI pipelines generally prefer it.

This focus on reliability takes us to the final piece, for now, in the evolution of Prompt Engineering. Once models became good enough, prompt engineers’ focus shifted from making the model return something useful to creating standardized workflows flexible enough to accommodate variability in users’ requirements without them needing strong prompting skills.

Tools such as GPT for Sheets, which execute prompts from individual Google Sheets cells, can do so dynamically (by reading the contents of other cells). Thanks to this behavior, prompt engineers can craft incomplete prompts that wait for the output of the previous step in the process, which further enriches the final output.
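Outside a spreadsheet, the same idea of an incomplete prompt that waits for an upstream result can be sketched with Python’s standard `string.Template`. This is an analogy, not how GPT for Sheets works internally; the template text and the upstream output are hypothetical.

```python
from string import Template

# A prompt written ahead of time; $outline will be filled by a previous step's output.
section_prompt = Template(
    "Write two paragraphs for a $channel post.\n"
    "Follow this outline exactly:\n$outline"
)

# Step 1: fill what is known now; safe_substitute leaves $outline in place instead of raising KeyError.
partial = Template(section_prompt.safe_substitute(channel="LinkedIn"))
print(partial.template)

# Step 2: once the earlier step returns its output, complete the prompt.
previous_output = "1. Why May 4th matters\n2. Our themed offer"  # hypothetical upstream output
final_prompt = partial.substitute(outline=previous_output)
print(final_prompt)
```

Using `safe_substitute` for the first pass and `substitute` for the last one means a missing value is tolerated while the prompt is being built but raises an error if it is still missing when the prompt is actually sent.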

This tool doesn’t require any coding skills, but if you have them, you can take things a step further by creating an application that you can share (or sell) to other users. Here the prompt engineer starts to take on the role of a product manager. Knowing what the final user wants to achieve with the tool and how they tend to ask for it will be critical to the app’s development.

If you don’t yet have coding skills, don’t worry. You can use ChatGPT to teach yourself how to code or even help you generate some of the necessary code. Just make sure you understand the code you are adding to your app.

Sometimes this development will require connecting Generative AI models to other existing tools. Zapier has been providing no-code app integrations since before OpenAI released its APIs and was one of the first providers to connect to them. If you can code, connecting without intermediaries will save you fees, give you more control, and make the workflow more reliable. Yet another way to create these integrations is by using apps inside AI platforms, such as Hugging Face or GPT-4’s plugin store, which is available to ChatGPT Plus subscribers and requires no coding.
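Connecting without intermediaries can be as small as one HTTP request. The sketch below uses only the Python standard library to build a request for OpenAI’s Chat Completions endpoint; the model name is an assumption (use whichever model your account offers), and the helper names are hypothetical.

```python
import json
import urllib.request

API_URL = "https://api.openai.com/v1/chat/completions"


def build_chat_request(prompt: str, api_key: str, model: str = "gpt-3.5-turbo") -> urllib.request.Request:
    """Build (but do not send) a direct Chat Completions request. The default model name is an assumption."""
    body = {"model": model, "messages": [{"role": "user", "content": prompt}]}
    return urllib.request.Request(
        API_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={"Authorization": f"Bearer {api_key}", "Content-Type": "application/json"},
        method="POST",
    )


def send(request: urllib.request.Request) -> str:
    """Send the request and return the text of the first choice in the response."""
    with urllib.request.urlopen(request, timeout=60) as response:
        payload = json.load(response)
    return payload["choices"][0]["message"]["content"]
```

To use it, read your key from an environment variable and call `send(build_chat_request("Suggest three May 4th post ideas.", key))`. Separating request construction from sending makes the payload easy to inspect or test without spending API credits.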

Prompt Engineering: A New Career?

What Does A Prompt Engineer Do?

As you can see, the scope of prompt engineering varies wildly depending on your aspirations and skills. But in all cases, you are the nexus between the human-generated requirements and the machine that will serve those demands.

As such, gaining clarity on what you or your organization needs and evaluating how well the output fits those demands will be the most valuable part of your job, together with speed.

Especially when you are working full-time as a prompt engineer, having consulting skills will be extremely useful. You will need to extract requirements from stakeholders, translate them into sensible prompts and build a workflow if it’s going to be a recurrent request, and finally present the result of your work to those stakeholders.

Chances are you won’t get this right the first time around, so it’s healthier for you and the organization to set the expectation that this will be a cyclical process with multiple iterations during which they, the stakeholders, will be able to provide input and feedback to guarantee that what you create will actually be used in the business.

If you enjoy communicating, you can also teach basic self-service prompting skills to your colleagues. This will reduce the number of ad-hoc requests you receive from stakeholders, allowing you to focus on the more business-critical opportunities. It will also raise your profile even further, showing that you are not only capable of solving problems but also proactive in helping others.

Because you will automate many processes, an alliance with software and data engineers will be strategically important. Instead of competing with them, work together. They have a good understanding of the internal processes, what has already been automated, and what they wish they could but haven’t been able to without Generative AI. Making them part of your projects will be great for their career because of the technology you will be showing them and for yours because of the project management experience you will gain.

How much does a prompt engineer make?

The more value you provide to the final user or your organization, the higher the salary you can command. But you already know that. So, what are some real examples of job pay in prompt engineering?

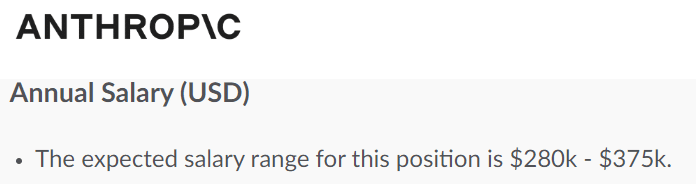

Prompt Engineer salaries working for AI companies

One of the sectors with the highest demand for Prompt Engineers is Generative AI organizations. Competition among them is fierce, as the most successful models and tools will take a huge market share. This thirst for talent creates job ads like this one from Anthropic.

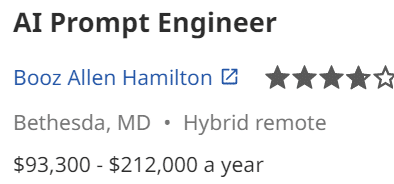

Prompt Engineer salaries working for consultancies

Another sector driving demand for Prompt Engineers is consulting. Businesses know that Generative AI can have an immense impact on their bottom line but are often unsure about how to implement this new technology. Consultancies bridge that gap, and to do so they need to hire more and more Prompt Engineers, giving us employment opportunities like the one below.

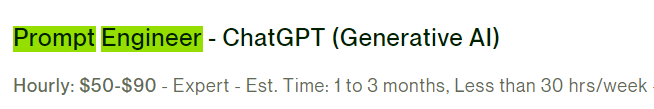

Prompt Engineer pay for freelance work

If full-time employment is not for you, don’t worry. Freelance work in this field is available too. A quick search on Upwork allowed us to find gigs like this.

Now, does that mean that these salaries and rates are the norm? No, not at all. But you can expect a baseline salary in the US to be half of those we’ve covered in this section. If the company is based outside the US and similarly wealthy countries, halve that estimate again. That second halving is already embedded in the freelance rate above.
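The rule of thumb above is simple arithmetic, sketched here for employee salaries only, since the freelance rate already includes the second halving. The function name and the advertised figure are hypothetical placeholders, not values taken from the ads above.

```python
def estimate_baseline_salary(advertised: float, us_or_similar: bool) -> float:
    """Rule of thumb from the text: halve the advertised figure, then halve again
    if the company is based outside the US and similarly wealthy countries."""
    baseline = advertised / 2
    return baseline if us_or_similar else baseline / 2


advertised = 200_000  # hypothetical advertised salary
print(estimate_baseline_salary(advertised, us_or_similar=True))   # 100000.0
print(estimate_baseline_salary(advertised, us_or_similar=False))  # 50000.0
```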

Could prompt engineering not be the job of the future?

Prompt Engineering is an extremely recent field, and its barrier to entry is relatively low. Natural language is less intimidating than writing code or the math data scientists use.

A wave of tech employees was laid off after the pandemic, and another one is coming as companies implement Generative AI in the workplace. Combined, these two factors make it likely that we will see a surge of supply, and hence competition, in the prompt engineering space, which will drive up the requirements to get a job in this field.

The good news is that good prompt engineers can provide such massive value to their employers that many organizations will fight to get at least one on their teams. This will be particularly true in startups, which require funding to grow. Growth expectations heavily influence a startup’s valuation, which in turn sets how much financing it can raise, so anyone who can accelerate growth is especially valuable to them.

In addition to prompt engineers (by job title), office workers use Generative AI for routine tasks and special projects. Prompt Engineering is becoming a general-purpose tool that will make employees more productive and more likely to advance in their careers. Those who don’t adapt to this new technology will stagnate and risk losing their jobs to those who do.

If none of those paths appeal to you because you don’t see yourself working for an employer in the long run, Prompt Engineering can still completely change your life for the better. Generative AI tools can help you automate or massively reduce the time required to complete your day-to-day tasks at work. If you feel secure in your job and don’t want to chase another, you could get your job done in 20% of the time and use the remaining time for personal tasks or for developing a side gig or business, as Tim Ferriss suggests in The 4-Hour Workweek. Just remember to check the ethical and legal implications of this strategy. You can ask ChatGPT about them, but don’t let it be your only advisor. Lawyers are still valuable in the age of Generative AI.

So the question we should be asking ourselves is not whether Prompt Engineering will be the job of the future but how much you will lean into it to meet your goals.

How to become a prompt engineer: first steps

So after reading this far, you have decided to become a prompt engineer. What’s next?!

Just as in other jobs in tech, it pays to solve problems and specialize. Instead of taking a course first, choose a problem you want to solve or a solution you want to build with Generative AI. The task should ideally have personal relevance and commercial impact. That way, you can discuss in an interview why you chose that problem and why the employer should care about it. If you already know what industry or company you want to work in, select something related.

Now that you have a mission, find any resources you may need to accomplish it. If you are taking a self-paced course, take only the bits and pieces that will help you achieve your goal. At this initial stage, it is critical to be pragmatic. Don’t aim to know all the technical details yet; just focus on solving your problem. In the process, you will learn a lot, in a way that makes it easier to recall for future projects and interviews. Not only that, but you will also be building a project portfolio to show potential employers.

After you complete your first project, remember to document what you did for future reference.

Next, rinse and repeat. Complete two or three more projects following the same method. These projects should ideally cover different but related areas, and even better if they integrate with each other. As you become more comfortable with the technology, increase the complexity of your projects: use APIs, create workflows, automate, and create a user interface if you have the skills.

Now that you can do the job, it’s time to fill the gaps. Take a course that covers how the models you will be using work from a machine-learning perspective. Will you be using this in your day-to-day job? Rarely, mainly when coming up with hypotheses about why a prompt doesn’t work. However, it will give you a competitive advantage when interviewing.

Prompt Engineering is the practice of crafting messages for Generative AI models statically or dynamically, with one or many prompts, in isolation or integrating with other tools in such a way that would generate value for the final user or organization commissioning it.

How much Prompt Engineering you incorporate into your job depends on your ambition and the competition in your field. You can also become a full-time prompt engineer, in which case learning a programming language and the background of the models you are working with will help you in interviews. But remember to learn Prompt Engineering by solving real problems, not just by going through theory.

A formidable prompt engineer, Dan Sanz blends a deep understanding of AI with practical insights into human-machine interaction. His work in prompt engineering, combined with years in the field of Data Science, is helping to redefine the possibilities for AI communication and engagement.